Claude Code without Claude

It has been another patchy week for Anthropic, with rolling outages of Claude Code and Claude Desktop hitting users throughout. Claude Code (CC) is so baked into our ways of working (we're an AI Native agency after all) that losing access to these models can cause a significant slowdown and is, admittedly, an operational risk.

Following on from our previous article, Why a Multi-AI Strategy is a Good Idea, we wanted to show exactly how we keep CC running when Anthropic is down. It's true that we could switch to GitHub Copilot, but that means missing out on some of the really nice functionality baked into CC that makes working with it so much fun. So we've been experimenting with using OpenRouter to substitute in different LLMs and keep CC up and running.

OpenRouter

OpenRouter is a provider aggregator (sometimes called an LLM gateway). It provides a simple interface for purchasing credits, which can then be spent on a huge variety of models. This makes a lot of sense for us because it lets us switch easily between models to trial them on projects and products.

The OpenRouter business model works by charging a simple and flat 5.5% fee on top of the provider's base rate. We like this because:

-

It keeps us very aware of the cost of various providers and allows us to weigh up the costs of different providers

-

It keeps us very focussed on how much we are spending on LLM usage. This worry largely disappears when you have a subscription like Claude Pro or Max, but AI Products and Apps aren't able to access these subscriptions (instead having to use the developer APIs which are charged on a per token basis).

-

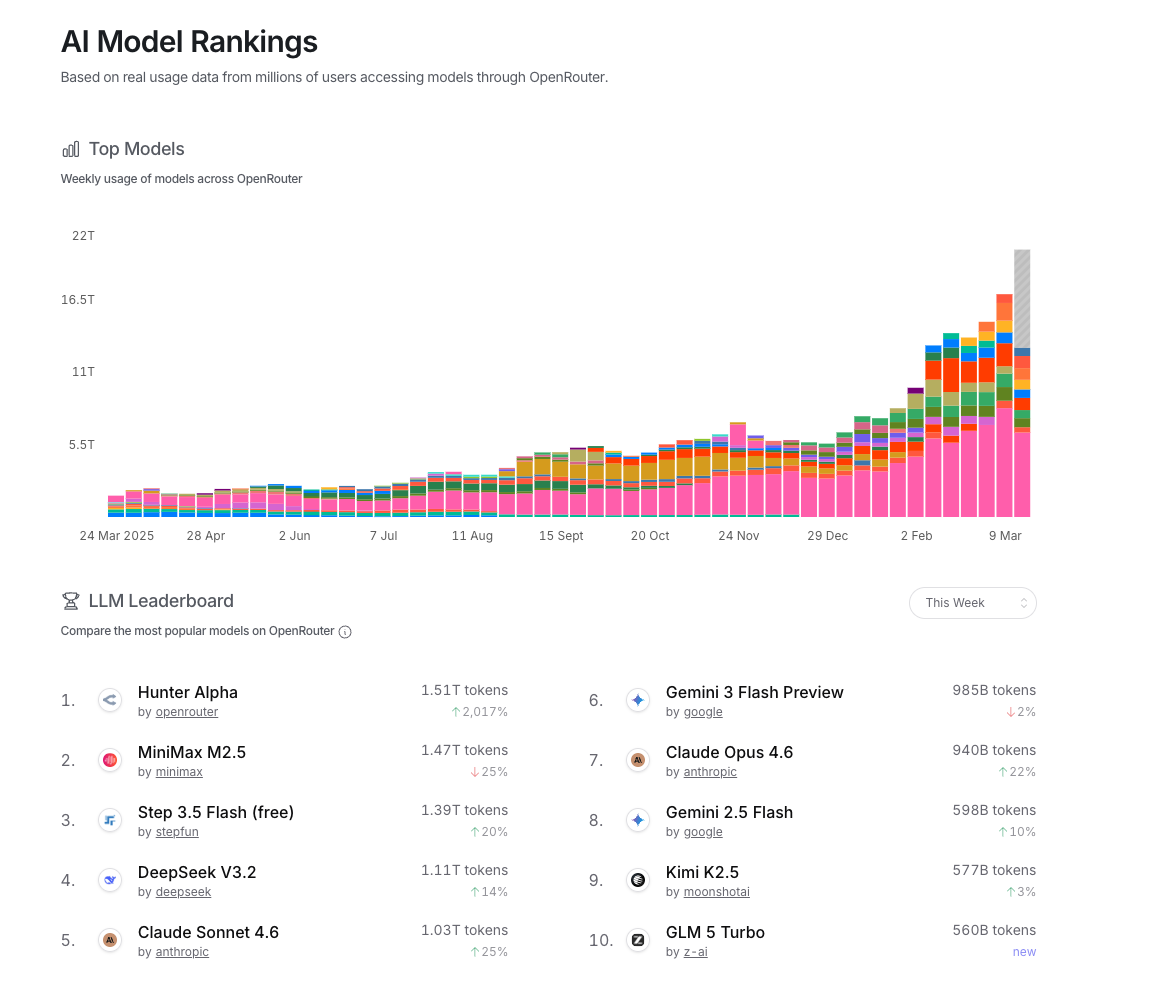

It gives great insight on the AI landscape and which models are popular right now. For example here's the current leader board.
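To put the flat 5.5% fee into numbers, here is a tiny sketch. It assumes the fee works exactly as described above, as a simple markup on the provider's base price (OpenRouter's actual billing mechanics may differ in detail), and `openrouter_cost` is a hypothetical helper name, not part of any OpenRouter tooling.

```shell
# Hypothetical helper: estimated OpenRouter cost for a given provider base
# cost in USD, ASSUMING a flat 5.5% fee is simply added on top.
openrouter_cost() {
  # awk does the floating-point maths and rounds to cents
  awk -v base="$1" 'BEGIN { printf "%.2f\n", base * 1.055 }'
}

openrouter_cost 10    # 10.55
openrouter_cost 100   # 105.50
```

In other words, every $100 of model usage costs roughly $105.50 through OpenRouter under this assumption.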

Claude and OpenRouter

A lesser-known feature of CC is that you can run it with other model providers. This means you can run it on local models, OpenRouter, LiteLLM, etc. Here are the instructions for OpenRouter:

- Add credit to your OpenRouter account (start with $10 say)

- Create an API key

- Add the following environment variables to your shell. As I still primarily use my Anthropic subscription for Claude Code, I only do the following when I switch over to OpenRouter:

```shell
# Move to your project directory
cd your-ai-project/

# Export environment variables:
export OPENROUTER_API_KEY="<your-openrouter-api-key>"
export ANTHROPIC_BASE_URL="https://openrouter.ai/api"
export ANTHROPIC_AUTH_TOKEN="$OPENROUTER_API_KEY"
export ANTHROPIC_API_KEY="" # Important: must be explicitly empty
```

- Now just launch `claude` as you usually would and specify the model you want to run it with:

# Fire CC up with a specific model

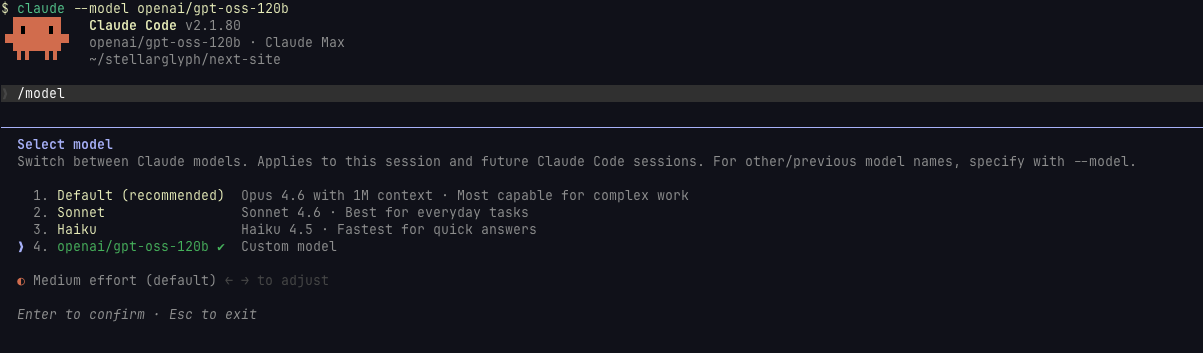

claude --model openai/gpt-oss-120b

# Or when you are within CC choose a model then

claude

/model openai/gpt-oss-120b

In both instances you should see the following:
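Since we only route CC through OpenRouter during an outage, it can help to wrap the exports above in a pair of shell functions so that switching back and forth is a single command. This is a minimal sketch of our own, not something shipped with Claude Code or OpenRouter: the names `use_openrouter` and `use_anthropic` are hypothetical, and `use_anthropic` assumes you normally log in with a Claude subscription rather than an `ANTHROPIC_API_KEY`, so it simply unsets everything, including the optional default-model variables covered in the next step.

```shell
# Hypothetical helpers for switching Claude Code between OpenRouter and the
# usual Anthropic login. Source this from ~/.bashrc or ~/.zshrc so the
# exports apply to your current shell.

# Route Claude Code through OpenRouter (expects OPENROUTER_API_KEY to be set).
use_openrouter() {
  if [ -z "${OPENROUTER_API_KEY:-}" ]; then
    echo "OPENROUTER_API_KEY is not set" >&2
    return 1
  fi
  export ANTHROPIC_BASE_URL="https://openrouter.ai/api"
  export ANTHROPIC_AUTH_TOKEN="$OPENROUTER_API_KEY"
  export ANTHROPIC_API_KEY=""   # must be explicitly empty
}

# Go back to the Anthropic subscription. ASSUMES you don't normally set
# ANTHROPIC_API_KEY yourself. Also clears the default-model overrides,
# which would otherwise keep pointing CC at non-Anthropic model IDs.
use_anthropic() {
  unset ANTHROPIC_BASE_URL ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY
  unset ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL \
        ANTHROPIC_DEFAULT_HAIKU_MODEL CLAUDE_CODE_SUBAGENT_MODEL
}
```

During an outage you would run `use_openrouter && claude --model openai/gpt-oss-120b`, then `use_anthropic` once Anthropic is back. If you'd rather nothing lingers in the shell at all, the same variables can instead be prefixed onto a single command (`ANTHROPIC_BASE_URL=... claude`), which applies them to that one process only.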

- If you prefer particular models, you can set them as defaults in your environment. The variables below map CC's Opus, Sonnet and Haiku tiers (plus its subagents) to OpenRouter models of your choice:

```shell
export ANTHROPIC_DEFAULT_OPUS_MODEL="minimax/minimax-m2.5"
export ANTHROPIC_DEFAULT_SONNET_MODEL="xiaomi/mimo-v2-pro"
export ANTHROPIC_DEFAULT_HAIKU_MODEL="stepfun/step-3.5-flash:exacto"
export CLAUDE_CODE_SUBAGENT_MODEL="google/gemini-3-flash-preview"
```

Wrap Up

What's become pretty clear is that CC is specifically optimised to run with Anthropic models, so using other models often produces worse results and sometimes doesn't work at all. Perhaps an even bigger issue is how bloated CC's context is: quite simply, each turn of work consumes far more tokens than I'd expect, even for simple tasks.

Right now we're also experimenting with running a lightweight agent instead of CC when using OpenRouter... more on that in a future post.

This workaround has been a lifesaver, though: it lets us keep working with other models, and together with our multi-AI strategy (subscriptions to both Raycast and GitHub Copilot) it means we can carry on with our work. No longer does an Anthropic or OpenAI outage result in a down-tools-and-head-to-the-pub scenario.