Why A Multi-AI Strategy Is A Good Idea

It was not a great start to March for Anthropic...

Anthropic's LLMs and Claude Code Agent are the stickiest software I have ever used. My usage of these tools has risen significantly in the last 6 months and has given me the ability to dramatically increase my productivity. However, the recent outages show what can happen when we become too dependent on these tools, and they have made us reflect on our own AI strategy.

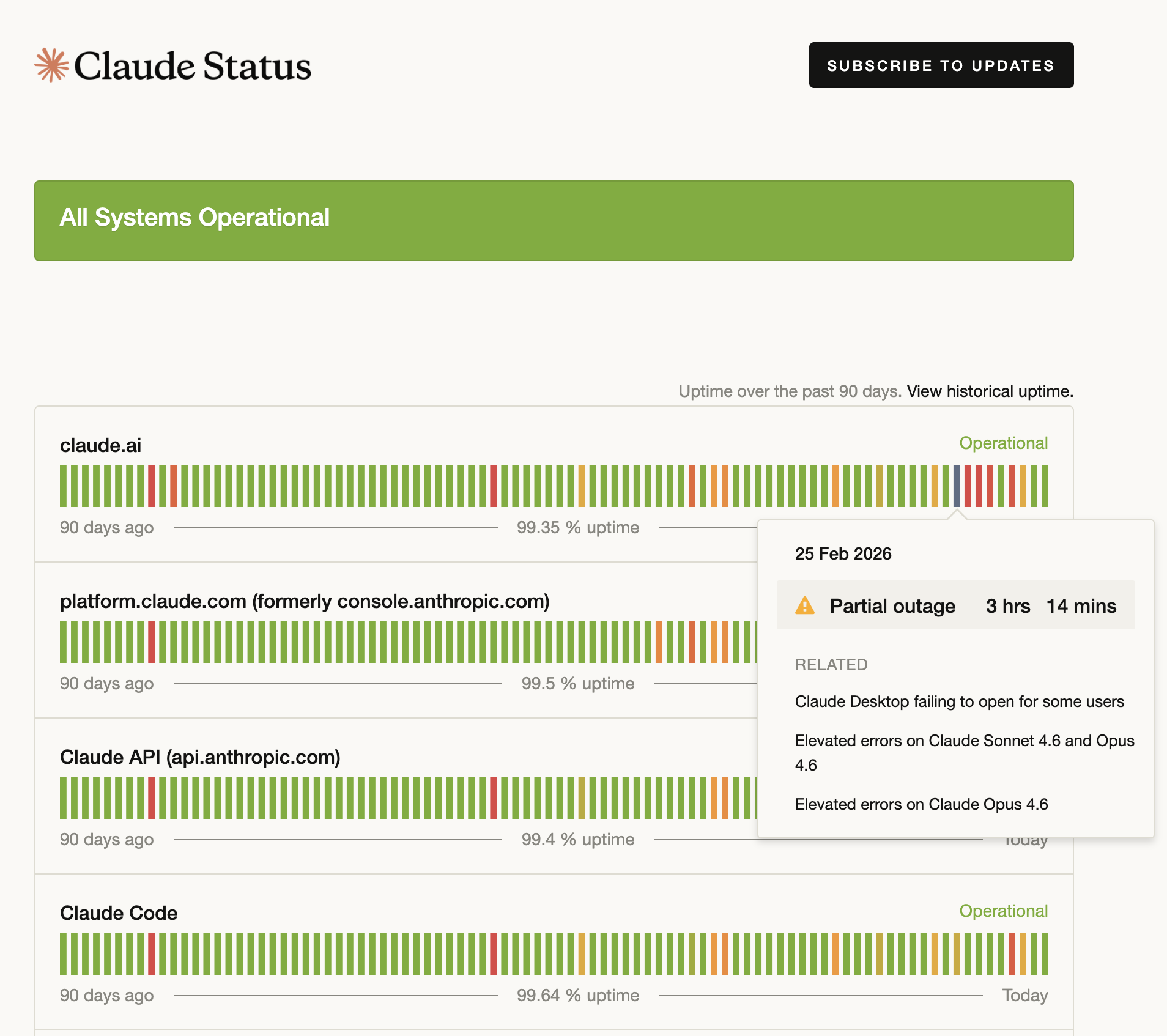

The outages

Anthropic had rolling outages across all their offerings from 25/02/2026 to 04/03/2026. In fact, many customers couldn't even log in to their accounts during this time. The next time there is a problem, you can check the current state of the services on Anthropic's status page. If you scroll back through its history, you can see that outages and problems are frequent: February alone had 58 incidents. Below is a list of some of these so you can get a feel for the types of failures the various parts of the platform suffer from:

- Elevated errors on Claude Opus 4.6

- Elevated errors on claude.ai

- Claude Desktop login issues

- Outage in usage reporting

- Claude Code showing "JSON Parse error: Unexpected EOF" and writing excessive files on Windows

- Claude Desktop failing to open for some users

- Elevated error rates across multiple models

- Claude's responses not appearing in Cowork

This isn't the full list of errors. If you are interested, you can check the rest on the status page.

This isn't a dig at Anthropic

Despite the list I've provided, this isn't a dig at Anthropic. Actually, what the company has achieved with its models and agents over the last 3 months has been phenomenal. It now leads OpenAI by a huge margin for software engineering work.

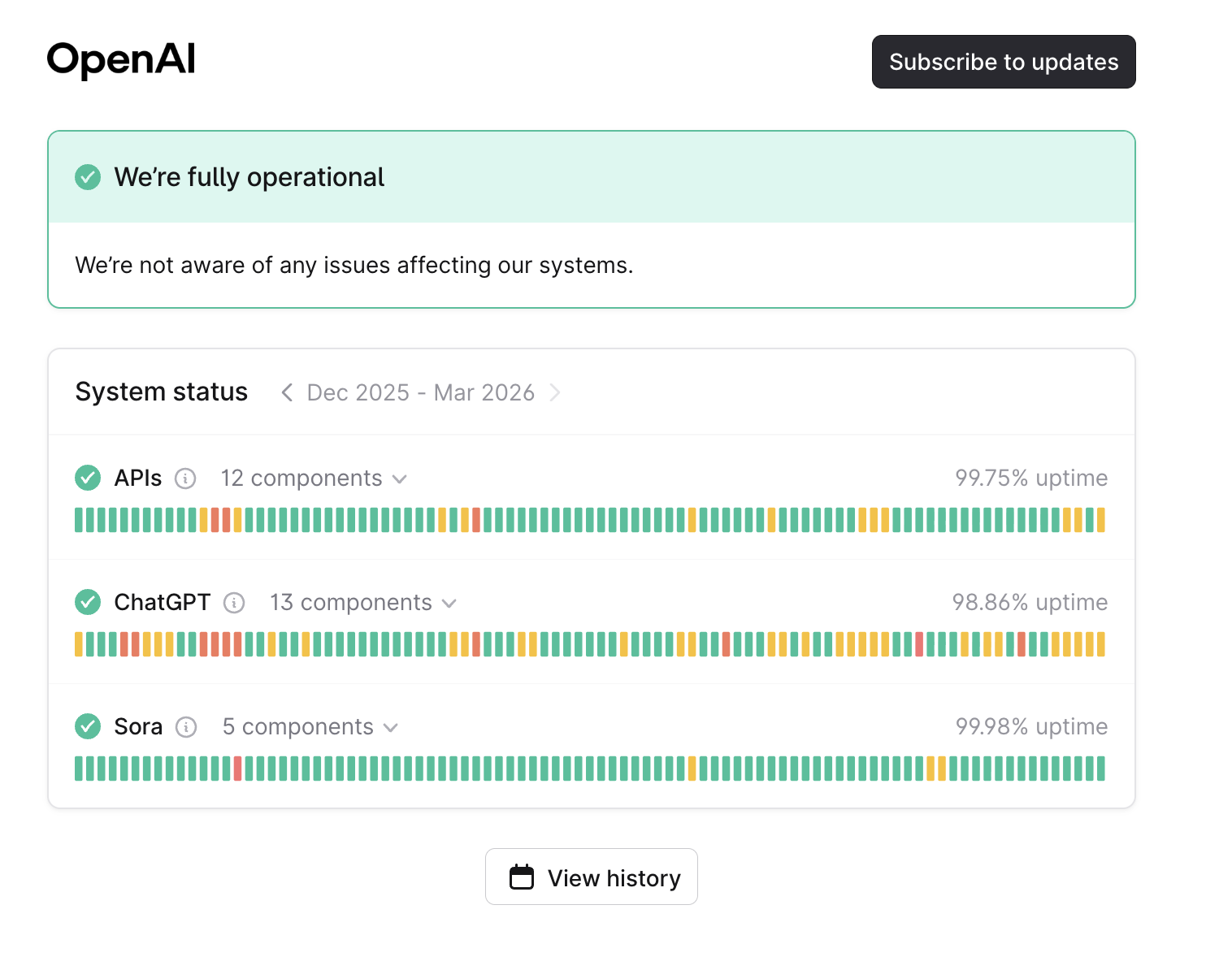

Instead, what it demonstrates is how increased usage of AI makes us more vulnerable to service interruption. The reality of the situation is that all AI companies are moving fast and breaking things in the rush to get ahead of the competition and continuously improve their products. This means that we should absolutely expect these systems to be offline for a day or more. In the interest of fairness, here's the same graphic from OpenAI:

How to deal with this risk?

Liberate Prompts and Context

Both OpenAI and Anthropic have built beautiful walled gardens where the more information and context you give them, the better the responses. You can see the issue here: if you load everything into their systems, then when their systems go down you lose access to all your data too. Remember, though, that behind all the magic are two fundamental concepts that fuel this power.

- Prompts: These are the instructions you give the model

- Context: This is the additional information you provide, which allows a model to augment its training

If you are able to host these two information sources yourself, you don't have to down tools if a service goes down. You can carry on working with another LLM service. Although this will likely give you different responses from your main provider (for example Anthropic, OpenAI or Google), it will at least allow you to continue working.
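
One way to keep prompts and context under your own control is to store them as plain files and only turn them into a request at the last moment. The sketch below assumes a hypothetical folder layout (`prompt.md` plus a `context/` directory of Markdown files) and a generic role/content message shape. Neither comes from any specific provider, so treat both as placeholders to adapt.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class PromptBundle:
    """A prompt plus its supporting context, stored outside any provider."""

    instructions: str
    context_docs: list[str]

    def to_messages(self) -> list[dict]:
        """Render a generic system/user message list.

        The role/content shape is an assumption: many chat APIs accept
        something similar, but check your provider's documentation.
        """
        context = "\n\n".join(self.context_docs)
        return [
            {"role": "system", "content": self.instructions},
            {"role": "user", "content": f"Context:\n{context}"},
        ]


def load_bundle(root: Path) -> PromptBundle:
    """Load prompt.md and context/*.md from a folder (hypothetical layout)."""
    instructions = (root / "prompt.md").read_text(encoding="utf-8")
    # Sort so the context order is stable regardless of filesystem order.
    docs = [
        path.read_text(encoding="utf-8")
        for path in sorted((root / "context").glob("*.md"))
    ]
    return PromptBundle(instructions=instructions, context_docs=docs)
```

Because the bundle lives in ordinary files, it can sit in version control and be fed to whichever service happens to be up.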

Multiple Provider Strategy

Because our work is at the centre of the AI space, we have multiple (and sometimes overlapping) subscriptions to services that aggregate access to many different providers. We have AI subscriptions to both GitHub Copilot and Raycast AI. With both of these subscriptions I could switch to various other providers and models (in this case, I went back to OpenAI) and was able to continue doing my work without too much interruption. It also gave me an opportunity to try some of the other model providers such as Mistral and Google's Gemini. Unfortunately, I found that most of them were sub-par compared to Anthropic's models for the type of work that I do.
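
If you call models from your own scripts rather than through a subscription UI, the same switch can be automated. The following is a minimal sketch of ordered fallback, not any vendor's API: `complete_with_fallback`, `primary_down` and `backup_ok` are hypothetical names, and the two stand-in functions only simulate an outage and a working backup. Real versions would wrap each provider's own SDK.

```python
from typing import Callable, Sequence

# A provider is a name plus a function that takes a prompt and returns text.
Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(
    prompt: str, providers: Sequence[Provider]
) -> tuple[str, str]:
    """Try each provider in order; return (name, response) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("All providers failed: " + "; ".join(errors))


# Stand-in functions for illustration only.
def primary_down(prompt: str) -> str:
    raise ConnectionError("service unavailable")


def backup_ok(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, text = complete_with_fallback(
        "Summarise this ticket",
        [("primary", primary_down), ("backup", backup_ok)],
    )
    print(used, text)
```

Returning the provider name alongside the response makes it easy to log when you were running on a backup, which matters because the answers may differ in quality.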

Recently there has been a rise in AI provider aggregators: services that provide a single portal to access multiple providers. Services like OpenRouter charge a flat mark-up on top of the base token price, which makes it easy to understand how much extra you are paying for their service. An alternative strategy is to sign up for pay-as-you-go accounts or smaller subscriptions with another provider. These have lower standing charges and so allow you to flexibly switch models as the need arises.
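
To see what a flat mark-up means in practice, the arithmetic can be sketched in a few lines. This assumes the mark-up is a percentage of the base token price. The function name and every number in the usage example are hypothetical and are not taken from any provider's or aggregator's price list.

```python
def aggregator_cost(
    tokens: int, base_price_per_million: float, markup_rate: float
) -> tuple[float, float]:
    """Return (total_cost, markup_portion) for a flat percentage mark-up.

    tokens: number of tokens used
    base_price_per_million: the underlying provider's price per 1M tokens
    markup_rate: the aggregator's mark-up as a fraction, e.g. 0.05 for 5%
    """
    base = tokens / 1_000_000 * base_price_per_million
    markup = base * markup_rate
    return base + markup, markup


if __name__ == "__main__":
    # Hypothetical figures: 2M tokens at 10.00 per million with a 5% mark-up.
    total, extra = aggregator_cost(2_000_000, 10.0, 0.05)
    print(f"total={total:.2f} of which mark-up={extra:.2f}")
```

Plugging in your own monthly token volume gives a quick way to compare the mark-up against the standing charge of a second subscription.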

Local Models

Open source local models can be run on your own infrastructure or hosted in the cloud. The benefit here is that you control all the infrastructure and the service and, as a side benefit, have complete control of your data. For the moment, open source models aren't as good as the best models from industry leaders. However, they are making incredible leaps. In the future, these models will be good enough for many tasks and we will perhaps see businesses switch to using self-hosted models for a lot of work, falling back to the more expensive closed-source models only for the most complicated work.
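
That split between routine and complicated work could be expressed as a simple routing rule. The sketch below is purely illustrative: the `Task` type, the 1 to 10 complexity score and the threshold of 6 are all assumptions, and how you would actually score a task is left open.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    complexity: int  # hypothetical 1-10 score, set by a person or a heuristic


def choose_model(task: Task, local_max_complexity: int = 6) -> str:
    """Route routine work to a self-hosted model and the rest to a hosted one."""
    if task.complexity <= local_max_complexity:
        return "local"
    return "hosted"


if __name__ == "__main__":
    for task in (Task("rename a variable", 2), Task("redesign the auth flow", 9)):
        print(task.description, "->", choose_model(task))
```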

So where to next?

The reality is that we are still at the very start of the AI boom. As these providers improve and develop their products, they will no doubt have more outages and failures than we are used to from more mature technologies. Therefore the onus for maintaining business continuity falls to us. There are a number of strategies here that we're adopting at StellarGlyph to ensure we aren't overly dependent on one provider or model, as the work we do is so dependent on AI partnership. Reach out to us if you'd like help creating a resilient AI strategy for your business, one which ensures that if Anthropic goes down your business can continue to operate.